Mirror of https://github.com/mountain-loop/yaak.git, synced 2026-02-12 05:37:44 +01:00

Compare commits: v2026.2.0- ... main (24 commits)

| Author | SHA1 | Date |
|---|---|---|
| | 1127d7e3fa | |
| | 7d4d228236 | |
| | 565e053ee8 | |
| | 26aba6034f | |
| | 9a1d613034 | |
| | 3e4de7d3c4 | |
| | b64b5ec0f8 | |
| | 510d1c7d17 | |
| | ed13a62269 | |
| | 935d613959 | |
| | adeaaccc45 | |
| | d253093333 | |
| | f265b7a572 | |
| | 68b2ff016f | |
| | a1c6295810 | |
| | 76ee3fa61b | |
| | 7fef35ce0a | |
| | 654af09951 | |
| | 484dcfade0 | |
| | fda18c5434 | |
| | a8176d6e9e | |
| | 957d8d9d46 | |
| | 5f18bf25e2 | |
| | 66942eaf2c | |

@@ -43,5 +43,7 @@ The skill generates markdown-formatted release notes following this structure:

 After outputting the release notes, ask the user if they would like to create a draft GitHub release with these notes. If they confirm, create the release using:

 ```bash
-gh release create <tag> --draft --prerelease --title "<tag>" --notes '<release notes>'
+gh release create <tag> --draft --prerelease --title "Release <version>" --notes '<release notes>'
 ```
+
+**IMPORTANT**: The release title format is "Release XXXX" where XXXX is the version WITHOUT the `v` prefix. For example, tag `v2026.2.1-beta.1` gets title "Release 2026.2.1-beta.1".
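The title rule in the IMPORTANT note above can be sketched as a small helper. This is only an illustration in Rust: `release_title` is a hypothetical name that does not appear in the repository, and the sketch assumes a tag carries at most one leading `v`.

```rust
/// Builds the release title for a tag, following the "Release XXXX" rule.
/// `release_title` is a hypothetical helper used for illustration only.
fn release_title(tag: &str) -> String {
    // Drop a single leading `v` if present; tags without it pass through unchanged.
    let version = tag.strip_prefix('v').unwrap_or(tag);
    format!("Release {version}")
}
```

For the example in the note, `release_title("v2026.2.1-beta.1")` yields `Release 2026.2.1-beta.1`.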

52

.github/workflows/flathub.yml

vendored

Normal file


@@ -0,0 +1,52 @@

name: Update Flathub
on:
  release:
    types: [published]

permissions:
  contents: read

jobs:
  update-flathub:
    name: Update Flathub manifest
    runs-on: ubuntu-latest
    # Only run for stable releases (skip betas/pre-releases)
    if: ${{ !github.event.release.prerelease }}
    steps:
      - name: Checkout app repo
        uses: actions/checkout@v4

      - name: Checkout Flathub repo
        uses: actions/checkout@v4
        with:
          repository: flathub/app.yaak.Yaak
          token: ${{ secrets.FLATHUB_TOKEN }}
          path: flathub-repo

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: "3.12"

      - name: Set up Node.js
        uses: actions/setup-node@v4
        with:
          node-version: "22"

      - name: Install source generators
        run: |
          pip install flatpak-node-generator tomlkit aiohttp
          git clone --depth 1 https://github.com/flatpak/flatpak-builder-tools flatpak/flatpak-builder-tools

      - name: Run update-manifest.sh
        run: bash flatpak/update-manifest.sh "${{ github.event.release.tag_name }}" flathub-repo

      - name: Commit and push to Flathub
        working-directory: flathub-repo
        run: |
          git config user.name "github-actions[bot]"
          git config user.email "github-actions[bot]@users.noreply.github.com"
          git add -A
          git diff --cached --quiet && echo "No changes to commit" && exit 0
          git commit -m "Update to ${{ github.event.release.tag_name }}"
          git push
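Besides the workflow-level `if:` guard on `github.event.release.prerelease`, the `flatpak/update-manifest.sh` script in this diff applies its own check: it strips a leading `v` from the tag and skips any version that contains a `-`. A minimal Rust sketch of that second check follows; `is_prerelease` is a hypothetical name, and the sketch assumes the only pre-release marker is a hyphen in the version string.

```rust
/// Returns true when a tag such as "v2026.2.1-beta.1" names a pre-release.
/// Mirrors the shell test `[[ "$VERSION" == *-* ]]` applied after `${VERSION_TAG#v}`.
/// `is_prerelease` is a hypothetical helper used for illustration only.
fn is_prerelease(version_tag: &str) -> bool {
    // Equivalent of `${VERSION_TAG#v}`: remove one leading `v` if present.
    let version = version_tag.strip_prefix('v').unwrap_or(version_tag);
    // Any hyphen marks a pre-release suffix such as `-beta.1`.
    version.contains('-')
}
```

Having both checks means a stable-looking tag on a release flagged as pre-release, or a hyphenated tag on a release not flagged as such, is still kept off Flathub.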

7

.gitignore

vendored


@@ -44,3 +44,10 @@ crates-tauri/yaak-app/tauri.worktree.conf.json

 # Tauri auto-generated permission files
 **/permissions/autogenerated
 **/permissions/schemas
+
+# Flatpak build artifacts
+flatpak-repo/
+.flatpak-builder/
+flatpak/flatpak-builder-tools/
+flatpak/cargo-sources.json
+flatpak/node-sources.json

1

Cargo.lock

generated


@@ -8167,6 +8167,7 @@ dependencies = [

|

||||

"cookie",

|

||||

"flate2",

|

||||

"futures-util",

|

||||

"http-body",

|

||||

"hyper-util",

|

||||

"log",

|

||||

"mime_guess",

|

||||

|

||||

@@ -414,7 +414,7 @@ async fn execute_transaction<R: Runtime>(

|

||||

sendable_request.body = Some(SendableBody::Bytes(bytes));

|

||||

None

|

||||

}

|

||||

Some(SendableBody::Stream(stream)) => {

|

||||

Some(SendableBody::Stream { data: stream, content_length }) => {

|

||||

// Wrap stream with TeeReader to capture data as it's read

|

||||

// Use unbounded channel to ensure all data is captured without blocking the HTTP request

|

||||

let (body_chunk_tx, body_chunk_rx) = tokio::sync::mpsc::unbounded_channel::<Vec<u8>>();

|

||||

@@ -448,7 +448,7 @@ async fn execute_transaction<R: Runtime>(

|

||||

None

|

||||

};

|

||||

|

||||

sendable_request.body = Some(SendableBody::Stream(pinned));

|

||||

sendable_request.body = Some(SendableBody::Stream { data: pinned, content_length });

|

||||

handle

|

||||

}

|

||||

None => {

|

||||

|

||||

@@ -1095,8 +1095,13 @@ async fn cmd_get_http_authentication_config<R: Runtime>(

|

||||

|

||||

// Convert HashMap<String, JsonPrimitive> to serde_json::Value for rendering

|

||||

let values_json: serde_json::Value = serde_json::to_value(&values)?;

|

||||

let rendered_json =

|

||||

render_json_value(values_json, environment_chain, &cb, &RenderOptions::throw()).await?;

|

||||

let rendered_json = render_json_value(

|

||||

values_json,

|

||||

environment_chain,

|

||||

&cb,

|

||||

&RenderOptions::return_empty(),

|

||||

)

|

||||

.await?;

|

||||

|

||||

// Convert back to HashMap<String, JsonPrimitive>

|

||||

let rendered_values: HashMap<String, JsonPrimitive> = serde_json::from_value(rendered_json)?;

|

||||

|

||||

@@ -38,6 +38,9 @@ pub async fn render_grpc_request<T: TemplateCallback>(

|

||||

|

||||

let mut metadata = Vec::new();

|

||||

for p in r.metadata.clone() {

|

||||

if !p.enabled {

|

||||

continue;

|

||||

}

|

||||

metadata.push(HttpRequestHeader {

|

||||

enabled: p.enabled,

|

||||

name: parse_and_render(p.name.as_str(), vars, cb, &opt).await?,

|

||||

@@ -119,6 +122,7 @@ pub async fn render_http_request<T: TemplateCallback>(

|

||||

|

||||

let mut body = BTreeMap::new();

|

||||

for (k, v) in r.body.clone() {

|

||||

let v = if k == "form" { strip_disabled_form_entries(v) } else { v };

|

||||

body.insert(k, render_json_value_raw(v, vars, cb, &opt).await?);

|

||||

}

|

||||

|

||||

@@ -161,3 +165,71 @@ pub async fn render_http_request<T: TemplateCallback>(

|

||||

|

||||

Ok(HttpRequest { url, url_parameters, headers, body, authentication, ..r.to_owned() })

|

||||

}

|

||||

|

||||

/// Strip disabled entries from a JSON array of form objects.

|

||||

fn strip_disabled_form_entries(v: Value) -> Value {

|

||||

match v {

|

||||

Value::Array(items) => Value::Array(

|

||||

items

|

||||

.into_iter()

|

||||

.filter(|item| item.get("enabled").and_then(|e| e.as_bool()).unwrap_or(true))

|

||||

.collect(),

|

||||

),

|

||||

v => v,

|

||||

}

|

||||

}

|

||||

|

||||

#[cfg(test)]

|

||||

mod tests {

|

||||

use super::*;

|

||||

use serde_json::json;

|

||||

|

||||

#[test]

|

||||

fn test_strip_disabled_form_entries() {

|

||||

let input = json!([

|

||||

{"enabled": true, "name": "foo", "value": "bar"},

|

||||

{"enabled": false, "name": "disabled", "value": "gone"},

|

||||

{"enabled": true, "name": "baz", "value": "qux"},

|

||||

]);

|

||||

let result = strip_disabled_form_entries(input);

|

||||

assert_eq!(

|

||||

result,

|

||||

json!([

|

||||

{"enabled": true, "name": "foo", "value": "bar"},

|

||||

{"enabled": true, "name": "baz", "value": "qux"},

|

||||

])

|

||||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn test_strip_disabled_form_entries_all_disabled() {

|

||||

let input = json!([

|

||||

{"enabled": false, "name": "a", "value": "b"},

|

||||

{"enabled": false, "name": "c", "value": "d"},

|

||||

]);

|

||||

let result = strip_disabled_form_entries(input);

|

||||

assert_eq!(result, json!([]));

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn test_strip_disabled_form_entries_missing_enabled_defaults_to_kept() {

|

||||

let input = json!([

|

||||

{"name": "no_enabled_field", "value": "kept"},

|

||||

{"enabled": false, "name": "disabled", "value": "gone"},

|

||||

]);

|

||||

let result = strip_disabled_form_entries(input);

|

||||

assert_eq!(

|

||||

result,

|

||||

json!([

|

||||

{"name": "no_enabled_field", "value": "kept"},

|

||||

])

|

||||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn test_strip_disabled_form_entries_non_array_passthrough() {

|

||||

let input = json!("just a string");

|

||||

let result = strip_disabled_form_entries(input.clone());

|

||||

assert_eq!(result, input);

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,9 +1,6 @@

|

||||

{

|

||||

"build": {

|

||||

"features": [

|

||||

"updater",

|

||||

"license"

|

||||

]

|

||||

"features": ["updater", "license"]

|

||||

},

|

||||

"app": {

|

||||

"security": {

|

||||

@@ -11,12 +8,8 @@

|

||||

"default",

|

||||

{

|

||||

"identifier": "release",

|

||||

"windows": [

|

||||

"*"

|

||||

],

|

||||

"permissions": [

|

||||

"yaak-license:default"

|

||||

]

|

||||

"windows": ["*"],

|

||||

"permissions": ["yaak-license:default"]

|

||||

}

|

||||

]

|

||||

}

|

||||

@@ -39,14 +32,7 @@

|

||||

"createUpdaterArtifacts": true,

|

||||

"longDescription": "A cross-platform desktop app for interacting with REST, GraphQL, and gRPC",

|

||||

"shortDescription": "Play with APIs, intuitively",

|

||||

"targets": [

|

||||

"app",

|

||||

"appimage",

|

||||

"deb",

|

||||

"dmg",

|

||||

"nsis",

|

||||

"rpm"

|

||||

],

|

||||

"targets": ["app", "appimage", "deb", "dmg", "nsis", "rpm"],

|

||||

"macOS": {

|

||||

"minimumSystemVersion": "13.0",

|

||||

"exceptionDomain": "",

|

||||

@@ -58,10 +44,16 @@

|

||||

},

|

||||

"linux": {

|

||||

"deb": {

|

||||

"desktopTemplate": "./template.desktop"

|

||||

"desktopTemplate": "./template.desktop",

|

||||

"files": {

|

||||

"/usr/share/metainfo/app.yaak.Yaak.metainfo.xml": "../../flatpak/app.yaak.Yaak.metainfo.xml"

|

||||

}

|

||||

},

|

||||

"rpm": {

|

||||

"desktopTemplate": "./template.desktop"

|

||||

"desktopTemplate": "./template.desktop",

|

||||

"files": {

|

||||

"/usr/share/metainfo/app.yaak.Yaak.metainfo.xml": "../../flatpak/app.yaak.Yaak.metainfo.xml"

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -32,22 +32,30 @@ export interface GitCallbacks {

|

||||

|

||||

const onSuccess = () => queryClient.invalidateQueries({ queryKey: ['git'] });

|

||||

|

||||

export function useGit(dir: string, callbacks: GitCallbacks) {

|

||||

export function useGit(dir: string, callbacks: GitCallbacks, refreshKey?: string) {

|

||||

const mutations = useMemo(() => gitMutations(dir, callbacks), [dir, callbacks]);

|

||||

const fetchAll = useQuery<void, string>({

|

||||

queryKey: ['git', 'fetch_all', dir, refreshKey],

|

||||

queryFn: () => invoke('cmd_git_fetch_all', { dir }),

|

||||

refetchInterval: 10 * 60_000,

|

||||

});

|

||||

return [

|

||||

{

|

||||

remotes: useQuery<GitRemote[], string>({

|

||||

queryKey: ['git', 'remotes', dir],

|

||||

queryKey: ['git', 'remotes', dir, refreshKey],

|

||||

queryFn: () => getRemotes(dir),

|

||||

placeholderData: (prev) => prev,

|

||||

}),

|

||||

log: useQuery<GitCommit[], string>({

|

||||

queryKey: ['git', 'log', dir],

|

||||

queryKey: ['git', 'log', dir, refreshKey],

|

||||

queryFn: () => invoke('cmd_git_log', { dir }),

|

||||

placeholderData: (prev) => prev,

|

||||

}),

|

||||

status: useQuery<GitStatusSummary, string>({

|

||||

refetchOnMount: true,

|

||||

queryKey: ['git', 'status', dir],

|

||||

queryKey: ['git', 'status', dir, refreshKey, fetchAll.dataUpdatedAt],

|

||||

queryFn: () => invoke('cmd_git_status', { dir }),

|

||||

placeholderData: (prev) => prev,

|

||||

}),

|

||||

},

|

||||

mutations,

|

||||

@@ -152,10 +160,7 @@ export const gitMutations = (dir: string, callbacks: GitCallbacks) => {

|

||||

},

|

||||

onSuccess,

|

||||

}),

|

||||

fetchAll: createFastMutation<void, string, void>({

|

||||

mutationKey: ['git', 'fetch_all', dir],

|

||||

mutationFn: () => invoke('cmd_git_fetch_all', { dir }),

|

||||

}),

|

||||

|

||||

push: createFastMutation<PushResult, string, void>({

|

||||

mutationKey: ['git', 'push', dir],

|

||||

mutationFn: push,

|

||||

|

||||

@@ -12,6 +12,7 @@ bytes = "1.11.1"

|

||||

cookie = "0.18.1"

|

||||

flate2 = "1"

|

||||

futures-util = "0.3"

|

||||

http-body = "1"

|

||||

url = "2"

|

||||

zstd = "0.13"

|

||||

hyper-util = { version = "0.1.17", default-features = false, features = ["client-legacy"] }

|

||||

|

||||

@@ -2,7 +2,9 @@ use crate::decompress::{ContentEncoding, streaming_decoder};

|

||||

use crate::error::{Error, Result};

|

||||

use crate::types::{SendableBody, SendableHttpRequest};

|

||||

use async_trait::async_trait;

|

||||

use bytes::Bytes;

|

||||

use futures_util::StreamExt;

|

||||

use http_body::{Body as HttpBody, Frame, SizeHint};

|

||||

use reqwest::{Client, Method, Version};

|

||||

use std::fmt::Display;

|

||||

use std::pin::Pin;

|

||||

@@ -413,10 +415,16 @@ impl HttpSender for ReqwestSender {

|

||||

Some(SendableBody::Bytes(bytes)) => {

|

||||

req_builder = req_builder.body(bytes);

|

||||

}

|

||||

Some(SendableBody::Stream(stream)) => {

|

||||

// Convert AsyncRead stream to reqwest Body

|

||||

let stream = tokio_util::io::ReaderStream::new(stream);

|

||||

let body = reqwest::Body::wrap_stream(stream);

|

||||

Some(SendableBody::Stream { data, content_length }) => {

|

||||

// Convert AsyncRead stream to reqwest Body. If content length is

|

||||

// known, wrap with a SizedBody so hyper can set Content-Length

|

||||

// automatically (for both HTTP/1.1 and HTTP/2).

|

||||

let stream = tokio_util::io::ReaderStream::new(data);

|

||||

let body = if let Some(len) = content_length {

|

||||

reqwest::Body::wrap(SizedBody::new(stream, len))

|

||||

} else {

|

||||

reqwest::Body::wrap_stream(stream)

|

||||

};

|

||||

req_builder = req_builder.body(body);

|

||||

}

|

||||

}

|

||||

@@ -520,6 +528,51 @@ impl HttpSender for ReqwestSender {

|

||||

}

|

||||

}

|

||||

|

||||

/// A wrapper around a byte stream that reports a known content length via

|

||||

/// `size_hint()`. This lets hyper set the `Content-Length` header

|

||||

/// automatically based on the body size, without us having to add it as an

|

||||

/// explicit header — which can cause duplicate `Content-Length` headers and

|

||||

/// break HTTP/2.

|

||||

struct SizedBody<S> {

|

||||

stream: std::sync::Mutex<S>,

|

||||

remaining: u64,

|

||||

}

|

||||

|

||||

impl<S> SizedBody<S> {

|

||||

fn new(stream: S, content_length: u64) -> Self {

|

||||

Self { stream: std::sync::Mutex::new(stream), remaining: content_length }

|

||||

}

|

||||

}

|

||||

|

||||

impl<S> HttpBody for SizedBody<S>

|

||||

where

|

||||

S: futures_util::Stream<Item = std::result::Result<Bytes, std::io::Error>> + Send + Unpin + 'static,

|

||||

{

|

||||

type Data = Bytes;

|

||||

type Error = std::io::Error;

|

||||

|

||||

fn poll_frame(

|

||||

self: Pin<&mut Self>,

|

||||

cx: &mut Context<'_>,

|

||||

) -> Poll<Option<std::result::Result<Frame<Self::Data>, Self::Error>>> {

|

||||

let this = self.get_mut();

|

||||

let mut stream = this.stream.lock().unwrap();

|

||||

match stream.poll_next_unpin(cx) {

|

||||

Poll::Ready(Some(Ok(chunk))) => {

|

||||

this.remaining = this.remaining.saturating_sub(chunk.len() as u64);

|

||||

Poll::Ready(Some(Ok(Frame::data(chunk))))

|

||||

}

|

||||

Poll::Ready(Some(Err(e))) => Poll::Ready(Some(Err(e))),

|

||||

Poll::Ready(None) => Poll::Ready(None),

|

||||

Poll::Pending => Poll::Pending,

|

||||

}

|

||||

}

|

||||

|

||||

fn size_hint(&self) -> SizeHint {

|

||||

SizeHint::with_exact(self.remaining)

|

||||

}

|

||||

}

|

||||

|

||||

fn version_to_str(version: &Version) -> String {

|

||||

match *version {

|

||||

Version::HTTP_09 => "HTTP/0.9".to_string(),

|

||||

|

||||
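The byte accounting that `SizedBody` relies on in the hunk above can be modelled without any HTTP types. The sketch below is a simplified, std-only stand-in rather than the code from the diff: `RemainingBytes`, `on_chunk`, and `exact_hint` are hypothetical names, and the real type reports its value through `http_body::SizeHint`.

```rust
/// Simplified model of the counter inside `SizedBody` (hypothetical type).
struct RemainingBytes {
    remaining: u64,
}

impl RemainingBytes {
    /// Start with the full, known content length.
    fn new(content_length: u64) -> Self {
        Self { remaining: content_length }
    }

    /// Record a chunk that was yielded to the HTTP layer.
    /// `saturating_sub` keeps the counter at 0 if the stream yields more
    /// bytes than the declared length, instead of underflowing.
    fn on_chunk(&mut self, chunk: &[u8]) {
        self.remaining = self.remaining.saturating_sub(chunk.len() as u64);
    }

    /// The exact number of bytes still expected, which is what an exact
    /// size hint would report at this point.
    fn exact_hint(&self) -> u64 {
        self.remaining
    }
}
```

Before any chunk is read the hint equals the full content length, which is the value the HTTP layer can use for `Content-Length`; afterwards it shrinks with every chunk.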

@@ -16,7 +16,13 @@ pub(crate) const MULTIPART_BOUNDARY: &str = "------YaakFormBoundary";

|

||||

|

||||

pub enum SendableBody {

|

||||

Bytes(Bytes),

|

||||

Stream(Pin<Box<dyn AsyncRead + Send + 'static>>),

|

||||

Stream {

|

||||

data: Pin<Box<dyn AsyncRead + Send + 'static>>,

|

||||

/// Known content length for the stream, if available. This is used by

|

||||

/// the sender to set the body size hint so that hyper can set

|

||||

/// Content-Length automatically for both HTTP/1.1 and HTTP/2.

|

||||

content_length: Option<u64>,

|

||||

},

|

||||

}

|

||||

|

||||

enum SendableBodyWithMeta {

|

||||

@@ -31,7 +37,10 @@ impl From<SendableBodyWithMeta> for SendableBody {

|

||||

fn from(value: SendableBodyWithMeta) -> Self {

|

||||

match value {

|

||||

SendableBodyWithMeta::Bytes(b) => SendableBody::Bytes(b),

|

||||

SendableBodyWithMeta::Stream { data, .. } => SendableBody::Stream(data),

|

||||

SendableBodyWithMeta::Stream { data, content_length } => SendableBody::Stream {

|

||||

data,

|

||||

content_length: content_length.map(|l| l as u64),

|

||||

},

|

||||

}

|

||||

}

|

||||

}

|

||||

@@ -186,23 +195,11 @@ async fn build_body(

|

||||

}

|

||||

}

|

||||

|

||||

// Check if Transfer-Encoding: chunked is already set

|

||||

let has_chunked_encoding = headers.iter().any(|h| {

|

||||

h.0.to_lowercase() == "transfer-encoding" && h.1.to_lowercase().contains("chunked")

|

||||

});

|

||||

|

||||

// Add a Content-Length header only if chunked encoding is not being used

|

||||

if !has_chunked_encoding {

|

||||

let content_length = match body {

|

||||

Some(SendableBodyWithMeta::Bytes(ref bytes)) => Some(bytes.len()),

|

||||

Some(SendableBodyWithMeta::Stream { content_length, .. }) => content_length,

|

||||

None => None,

|

||||

};

|

||||

|

||||

if let Some(cl) = content_length {

|

||||

headers.push(("Content-Length".to_string(), cl.to_string()));

|

||||

}

|

||||

}

|

||||

// NOTE: Content-Length is NOT set as an explicit header here. Instead, the

|

||||

// body's content length is carried via SendableBody::Stream { content_length }

|

||||

// and used by the sender to set the body size hint. This lets hyper handle

|

||||

// Content-Length automatically for both HTTP/1.1 and HTTP/2, avoiding the

|

||||

// duplicate Content-Length that breaks HTTP/2 servers.

|

||||

|

||||

Ok((body.map(|b| b.into()), headers))

|

||||

}

|

||||

@@ -928,7 +925,27 @@ mod tests {

|

||||

}

|

||||

|

||||

#[tokio::test]

|

||||

async fn test_no_content_length_with_chunked_encoding() -> Result<()> {

|

||||

async fn test_no_content_length_header_added_by_build_body() -> Result<()> {

|

||||

let mut body = BTreeMap::new();

|

||||

body.insert("text".to_string(), json!("Hello, World!"));

|

||||

|

||||

let headers = vec![];

|

||||

|

||||

let (_, result_headers) =

|

||||

build_body("POST", &Some("text/plain".to_string()), &body, headers).await?;

|

||||

|

||||

// Content-Length should NOT be set as an explicit header. Instead, the

|

||||

// sender uses the body's size_hint to let hyper set it automatically,

|

||||

// which works correctly for both HTTP/1.1 and HTTP/2.

|

||||

let has_content_length =

|

||||

result_headers.iter().any(|h| h.0.to_lowercase() == "content-length");

|

||||

assert!(!has_content_length, "Content-Length should not be set as an explicit header");

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

#[tokio::test]

|

||||

async fn test_chunked_encoding_header_preserved() -> Result<()> {

|

||||

let mut body = BTreeMap::new();

|

||||

body.insert("text".to_string(), json!("Hello, World!"));

|

||||

|

||||

@@ -938,11 +955,6 @@ mod tests {

|

||||

let (_, result_headers) =

|

||||

build_body("POST", &Some("text/plain".to_string()), &body, headers).await?;

|

||||

|

||||

// Verify that Content-Length is NOT present when Transfer-Encoding: chunked is set

|

||||

let has_content_length =

|

||||

result_headers.iter().any(|h| h.0.to_lowercase() == "content-length");

|

||||

assert!(!has_content_length, "Content-Length should not be present with chunked encoding");

|

||||

|

||||

// Verify that the Transfer-Encoding header is still present

|

||||

let has_chunked = result_headers.iter().any(|h| {

|

||||

h.0.to_lowercase() == "transfer-encoding" && h.1.to_lowercase().contains("chunked")

|

||||

@@ -951,31 +963,4 @@ mod tests {

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

#[tokio::test]

|

||||

async fn test_content_length_without_chunked_encoding() -> Result<()> {

|

||||

let mut body = BTreeMap::new();

|

||||

body.insert("text".to_string(), json!("Hello, World!"));

|

||||

|

||||

// Headers without Transfer-Encoding: chunked

|

||||

let headers = vec![];

|

||||

|

||||

let (_, result_headers) =

|

||||

build_body("POST", &Some("text/plain".to_string()), &body, headers).await?;

|

||||

|

||||

// Verify that Content-Length IS present when Transfer-Encoding: chunked is NOT set

|

||||

let content_length_header =

|

||||

result_headers.iter().find(|h| h.0.to_lowercase() == "content-length");

|

||||

assert!(

|

||||

content_length_header.is_some(),

|

||||

"Content-Length should be present without chunked encoding"

|

||||

);

|

||||

assert_eq!(

|

||||

content_length_header.unwrap().1,

|

||||

"13",

|

||||

"Content-Length should match the body size"

|

||||

);

|

||||

|

||||

Ok(())

|

||||

}

|

||||

}

|

||||

|

||||

8

crates/yaak-templates/build-wasm.cjs

Normal file


@@ -0,0 +1,8 @@

const { execSync } = require('node:child_process');

if (process.env.SKIP_WASM_BUILD === '1') {
  console.log('Skipping wasm-pack build (SKIP_WASM_BUILD=1)');
  return;
}

execSync('wasm-pack build --target bundler', { stdio: 'inherit' });

@@ -6,7 +6,7 @@

   "scripts": {
     "bootstrap": "npm run build",
     "build": "run-s build:*",
-    "build:pack": "wasm-pack build --target bundler",
+    "build:pack": "node build-wasm.cjs",
     "build:clean": "rimraf ./pkg/.gitignore"
   },
   "devDependencies": {

@@ -81,6 +81,10 @@ impl RenderOptions {

     pub fn throw() -> Self {
         Self { error_behavior: RenderErrorBehavior::Throw }
     }
+
+    pub fn return_empty() -> Self {
+        Self { error_behavior: RenderErrorBehavior::ReturnEmpty }
+    }
 }

 impl RenderErrorBehavior {
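The body of `impl RenderErrorBehavior` is not shown in this diff, so the difference between the two behaviors can only be sketched under an assumption: that `Throw` propagates a render error while `ReturnEmpty` replaces the failed value with an empty string. The enum below is a standalone stand-in, and `apply` is a hypothetical method name.

```rust
/// Standalone stand-in for the enum referenced in the diff.
#[derive(Clone, Copy)]
enum RenderErrorBehavior {
    Throw,
    ReturnEmpty,
}

impl RenderErrorBehavior {
    /// ASSUMED semantics (not confirmed by the diff): `Throw` passes the
    /// error through, `ReturnEmpty` swallows it and yields "".
    fn apply(self, result: Result<String, String>) -> Result<String, String> {
        match (self, result) {
            // Successful renders are returned unchanged in both modes.
            (_, Ok(value)) => Ok(value),
            (RenderErrorBehavior::Throw, Err(e)) => Err(e),
            (RenderErrorBehavior::ReturnEmpty, Err(_)) => Ok(String::new()),
        }
    }
}
```

Under that assumption, switching `cmd_get_http_authentication_config` from `RenderOptions::throw()` to `RenderOptions::return_empty()` would let the authentication config load even when one of its template values fails to render.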

@@ -16,6 +16,9 @@ pub async fn render_websocket_request<T: TemplateCallback>(

|

||||

|

||||

let mut url_parameters = Vec::new();

|

||||

for p in r.url_parameters.clone() {

|

||||

if !p.enabled {

|

||||

continue;

|

||||

}

|

||||

url_parameters.push(HttpUrlParameter {

|

||||

enabled: p.enabled,

|

||||

name: parse_and_render(&p.name, vars, cb, opt).await?,

|

||||

@@ -26,6 +29,9 @@ pub async fn render_websocket_request<T: TemplateCallback>(

|

||||

|

||||

let mut headers = Vec::new();

|

||||

for p in r.headers.clone() {

|

||||

if !p.enabled {

|

||||

continue;

|

||||

}

|

||||

headers.push(HttpRequestHeader {

|

||||

enabled: p.enabled,

|

||||

name: parse_and_render(&p.name, vars, cb, opt).await?,

|

||||

|

||||

57

flatpak/app.yaak.Yaak.metainfo.xml

Normal file


@@ -0,0 +1,57 @@

|

||||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<component type="desktop-application">

|

||||

<id>app.yaak.Yaak</id>

|

||||

|

||||

<name>Yaak</name>

|

||||

<summary>An offline, Git friendly API Client</summary>

|

||||

|

||||

<developer id="app.yaak">

|

||||

<name>Yaak</name>

|

||||

</developer>

|

||||

|

||||

<metadata_license>MIT</metadata_license>

|

||||

<project_license>MIT</project_license>

|

||||

|

||||

<url type="homepage">https://yaak.app</url>

|

||||

<url type="bugtracker">https://yaak.app/feedback</url>

|

||||

<url type="contact">https://yaak.app/feedback</url>

|

||||

<url type="vcs-browser">https://github.com/mountain-loop/yaak</url>

|

||||

|

||||

<description>

|

||||

<p>

|

||||

A fast, privacy-first API client for REST, GraphQL, SSE, WebSocket,

|

||||

and gRPC — built with Tauri, Rust, and React.

|

||||

</p>

|

||||

<p>Features include:</p>

|

||||

<ul>

|

||||

<li>REST, GraphQL, SSE, WebSocket, and gRPC support</li>

|

||||

<li>Local-only data, secrets encryption, and zero telemetry</li>

|

||||

<li>Git-friendly plain-text project storage</li>

|

||||

<li>Environment variables and template functions</li>

|

||||

<li>Request chaining and dynamic values</li>

|

||||

<li>OAuth 2.0, Bearer, Basic, API Key, AWS, JWT, and NTLM authentication</li>

|

||||

<li>Import from cURL, Postman, Insomnia, and OpenAPI</li>

|

||||

<li>Extensible plugin system</li>

|

||||

</ul>

|

||||

</description>

|

||||

|

||||

<launchable type="desktop-id">app.yaak.Yaak.desktop</launchable>

|

||||

|

||||

<branding>

|

||||

<color type="primary" scheme_preference="light">#8b32ff</color>

|

||||

<color type="primary" scheme_preference="dark">#c293ff</color>

|

||||

</branding>

|

||||

|

||||

<content_rating type="oars-1.1" />

|

||||

|

||||

<screenshots>

|

||||

<screenshot type="default">

|

||||

<caption>Crafting an API request</caption>

|

||||

<image>https://assets.yaak.app/uploads/screenshot-BLG1w_2310x1326.png</image>

|

||||

</screenshot>

|

||||

</screenshots>

|

||||

|

||||

<releases>

|

||||

<release version="2026.2.0" date="2026-02-10" />

|

||||

</releases>

|

||||

</component>

|

||||

75

flatpak/fix-lockfile.mjs

Normal file


@@ -0,0 +1,75 @@

|

||||

#!/usr/bin/env node

|

||||

|

||||

// Adds missing `resolved` and `integrity` fields to npm package-lock.json.

|

||||

//

|

||||

// npm sometimes omits these fields for nested dependencies inside workspace

|

||||

// packages. This breaks offline installs and tools like flatpak-node-generator

|

||||

// that need explicit tarball URLs for every package.

|

||||

//

|

||||

// Based on https://github.com/grant-dennison/npm-package-lock-add-resolved

|

||||

// (MIT License, Copyright (c) 2024 Grant Dennison)

|

||||

|

||||

import { readFile, writeFile } from "node:fs/promises";

|

||||

import { get } from "node:https";

|

||||

|

||||

const lockfilePath = process.argv[2] || "package-lock.json";

|

||||

|

||||

function fetchJson(url) {

|

||||

return new Promise((resolve, reject) => {

|

||||

get(url, (res) => {

|

||||

let data = "";

|

||||

res.on("data", (chunk) => {

|

||||

data += chunk;

|

||||

});

|

||||

res.on("end", () => {

|

||||

if (res.statusCode === 200) {

|

||||

resolve(JSON.parse(data));

|

||||

} else {

|

||||

reject(`${url} returned ${res.statusCode} ${res.statusMessage}`);

|

||||

}

|

||||

});

|

||||

res.on("error", reject);

|

||||

}).on("error", reject);

|

||||

});

|

||||

}

|

||||

|

||||

async function fillResolved(name, p) {

|

||||

const version = p.version.replace(/^.*@/, "");

|

||||

console.log(`Retrieving metadata for ${name}@${version}`);

|

||||

const metadataUrl = `https://registry.npmjs.com/${name}/${version}`;

|

||||

const metadata = await fetchJson(metadataUrl);

|

||||

p.resolved = metadata.dist.tarball;

|

||||

p.integrity = metadata.dist.integrity;

|

||||

}

|

||||

|

||||

let changesMade = false;

|

||||

|

||||

async function fillAllResolved(packages) {

|

||||

for (const packagePath in packages) {

|

||||

if (packagePath === "") continue;

|

||||

if (!packagePath.includes("node_modules/")) continue;

|

||||

const p = packages[packagePath];

|

||||

if (p.link) continue;

|

||||

if (!p.inBundle && !p.bundled && (!p.resolved || !p.integrity)) {

|

||||

const packageName =

|

||||

p.name ||

|

||||

/^npm:(.+?)@.+$/.exec(p.version)?.[1] ||

|

||||

packagePath.replace(/^.*node_modules\/(?=.+?$)/, "");

|

||||

await fillResolved(packageName, p);

|

||||

changesMade = true;

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

const oldContents = await readFile(lockfilePath, "utf-8");

|

||||

const packageLock = JSON.parse(oldContents);

|

||||

|

||||

await fillAllResolved(packageLock.packages ?? []);

|

||||

|

||||

if (changesMade) {

|

||||

const newContents = JSON.stringify(packageLock, null, 2) + "\n";

|

||||

await writeFile(lockfilePath, newContents);

|

||||

console.log(`Updated ${lockfilePath}`);

|

||||

} else {

|

||||

console.log("No changes needed.");

|

||||

}

|

||||
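The name and version fallbacks used by `fillAllResolved` and `fillResolved` in `fix-lockfile.mjs` are packed into regular expressions. The Rust sketch below restates them with plain string operations. It is an approximation for illustration: `package_name`, `alias_target`, and `registry_version` are hypothetical names, and edge cases of the original regexes (for example a path that ends in `node_modules/`) are not reproduced.

```rust
/// Picks the registry package name in the same order as the script:
/// explicit `name`, then an `npm:` alias in `version`, then the lockfile path.
fn package_name(name: Option<&str>, version: &str, package_path: &str) -> String {
    if let Some(n) = name.filter(|n| !n.is_empty()) {
        return n.to_string();
    }
    if let Some(alias) = alias_target(version) {
        return alias.to_string();
    }
    // Keep only what follows the last "node_modules/" segment.
    match package_path.rfind("node_modules/") {
        Some(i) => package_path[i + "node_modules/".len()..].to_string(),
        None => package_path.to_string(),
    }
}

/// For an alias such as "npm:@scope/pkg@1.2.3", returns "@scope/pkg".
fn alias_target(version: &str) -> Option<&str> {
    let rest = version.strip_prefix("npm:")?;
    // Skip the first character so a leading scope `@` is not treated as
    // the name/version separator.
    let first_len = rest.chars().next()?.len_utf8();
    let at = rest[first_len..].find('@')? + first_len;
    // Require a non-empty version after the separator.
    if at + 1 < rest.len() { Some(&rest[..at]) } else { None }
}

/// Strips everything up to the last `@`, so "npm:@scope/pkg@1.2.3" becomes "1.2.3".
fn registry_version(version: &str) -> &str {
    version.rsplit('@').next().unwrap_or(version)
}
```

The resulting name and version are what the script interpolates into the registry metadata URL before copying `dist.tarball` and `dist.integrity` into the lockfile entry.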

48

flatpak/generate-sources.sh

Executable file


@@ -0,0 +1,48 @@

|

||||

#!/usr/bin/env bash

|

||||

#

|

||||

# Generate offline dependency source files for Flatpak builds.

|

||||

#

|

||||

# Prerequisites:

|

||||

# pip install flatpak-node-generator tomlkit aiohttp

|

||||

# Clone https://github.com/flatpak/flatpak-builder-tools (for cargo generator)

|

||||

#

|

||||

# Usage:

|

||||

# ./flatpak/generate-sources.sh <flathub-repo-path>

|

||||

# ./flatpak/generate-sources.sh ../flathub-repo

|

||||

|

||||

set -euo pipefail

|

||||

|

||||

SCRIPT_DIR="$(cd "$(dirname "$0")" && pwd)"

|

||||

REPO_ROOT="$(cd "$SCRIPT_DIR/.." && pwd)"

|

||||

|

||||

if [ $# -lt 1 ]; then

|

||||

echo "Usage: $0 <flathub-repo-path>"

|

||||

echo "Example: $0 ../flathub-repo"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

FLATHUB_REPO="$(cd "$1" && pwd)"

|

||||

|

||||

python3 "$SCRIPT_DIR/flatpak-builder-tools/cargo/flatpak-cargo-generator.py" \

|

||||

-o "$FLATHUB_REPO/cargo-sources.json" "$REPO_ROOT/Cargo.lock"

|

||||

|

||||

TMPDIR=$(mktemp -d)

|

||||

trap 'rm -rf "$TMPDIR"' EXIT

|

||||

|

||||

cp "$REPO_ROOT/package-lock.json" "$TMPDIR/package-lock.json"

|

||||

cp "$REPO_ROOT/package.json" "$TMPDIR/package.json"

|

||||

|

||||

node "$SCRIPT_DIR/fix-lockfile.mjs" "$TMPDIR/package-lock.json"

|

||||

|

||||

node -e "

|

||||

const fs = require('fs');

|

||||

const p = process.argv[1];

|

||||

const d = JSON.parse(fs.readFileSync(p, 'utf-8'));

|

||||

for (const [name, info] of Object.entries(d.packages || {})) {

|

||||

if (name && (info.link || !info.resolved)) delete d.packages[name];

|

||||

}

|

||||

fs.writeFileSync(p, JSON.stringify(d, null, 2));

|

||||

" "$TMPDIR/package-lock.json"

|

||||

|

||||

flatpak-node-generator --no-requests-cache \

|

||||

-o "$FLATHUB_REPO/node-sources.json" npm "$TMPDIR/package-lock.json"

|

||||

86

flatpak/update-manifest.sh

Executable file


@@ -0,0 +1,86 @@
#!/usr/bin/env bash
#
# Update the Flathub repo for a new release.
#
# Usage:
# ./flatpak/update-manifest.sh <version-tag> <flathub-repo-path>
# ./flatpak/update-manifest.sh v2026.2.0 ../flathub-repo

set -euo pipefail

SCRIPT_DIR="$(cd "$(dirname "$0")" && pwd)"
REPO_ROOT="$(cd "$SCRIPT_DIR/.." && pwd)"

if [ $# -lt 2 ]; then
    echo "Usage: $0 <version-tag> <flathub-repo-path>"
    echo "Example: $0 v2026.2.0 ../flathub-repo"
    exit 1
fi

VERSION_TAG="$1"
VERSION="${VERSION_TAG#v}"
FLATHUB_REPO="$(cd "$2" && pwd)"
MANIFEST="$FLATHUB_REPO/app.yaak.Yaak.yml"
METAINFO="$SCRIPT_DIR/app.yaak.Yaak.metainfo.xml"

if [[ "$VERSION" == *-* ]]; then
    echo "Skipping pre-release version '$VERSION_TAG' (only stable releases are published to Flathub)"
    exit 0
fi

REPO="mountain-loop/yaak"
COMMIT=$(git ls-remote "https://github.com/$REPO.git" "refs/tags/$VERSION_TAG" | cut -f1)

if [ -z "$COMMIT" ]; then
    echo "Error: Could not resolve commit for tag $VERSION_TAG"
    exit 1
fi

echo "Tag: $VERSION_TAG"
echo "Commit: $COMMIT"

# Update git tag and commit in the manifest
sed -i "s|tag: v.*|tag: $VERSION_TAG|" "$MANIFEST"
sed -i "s|commit: .*|commit: $COMMIT|" "$MANIFEST"
echo "Updated manifest tag and commit."

# Regenerate offline dependency sources from the tagged lockfiles
TMPDIR=$(mktemp -d)
trap 'rm -rf "$TMPDIR"' EXIT

echo "Fetching lockfiles from $VERSION_TAG..."
curl -fsSL "https://raw.githubusercontent.com/$REPO/$VERSION_TAG/Cargo.lock" -o "$TMPDIR/Cargo.lock"
curl -fsSL "https://raw.githubusercontent.com/$REPO/$VERSION_TAG/package-lock.json" -o "$TMPDIR/package-lock.json"
curl -fsSL "https://raw.githubusercontent.com/$REPO/$VERSION_TAG/package.json" -o "$TMPDIR/package.json"

echo "Generating cargo-sources.json..."
python3 "$SCRIPT_DIR/flatpak-builder-tools/cargo/flatpak-cargo-generator.py" \
    -o "$FLATHUB_REPO/cargo-sources.json" "$TMPDIR/Cargo.lock"

echo "Generating node-sources.json..."
node "$SCRIPT_DIR/fix-lockfile.mjs" "$TMPDIR/package-lock.json"

node -e "
    const fs = require('fs');
    const p = process.argv[1];
    const d = JSON.parse(fs.readFileSync(p, 'utf-8'));
    for (const [name, info] of Object.entries(d.packages || {})) {
        if (name && (info.link || !info.resolved)) delete d.packages[name];
    }
    fs.writeFileSync(p, JSON.stringify(d, null, 2));
" "$TMPDIR/package-lock.json"

flatpak-node-generator --no-requests-cache \
    -o "$FLATHUB_REPO/node-sources.json" npm "$TMPDIR/package-lock.json"

# Update metainfo with new release
TODAY=$(date +%Y-%m-%d)
sed -i "s| <releases>| <releases>\n <release version=\"$VERSION\" date=\"$TODAY\" />|" "$METAINFO"
echo "Updated metainfo with release $VERSION."

echo ""
echo "Done! Review the changes:"
echo " $MANIFEST"
echo " $METAINFO"
echo " $FLATHUB_REPO/cargo-sources.json"
echo " $FLATHUB_REPO/node-sources.json"
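
The script derives the version by stripping one leading `v` from the tag (`${VERSION_TAG#v}`) and treats any version containing a hyphen (`*-*`) as a pre-release to skip. A minimal TypeScript sketch of those two rules follows; `parseReleaseTag` and `ReleaseTag` are hypothetical names used only for illustration.

```typescript
// Hypothetical shape describing what the script derives from a tag.
interface ReleaseTag {
  tag: string; // as passed on the command line, e.g. "v2026.2.0"
  version: string; // tag with a single leading "v" removed
  prerelease: boolean; // true when the version contains a hyphen
}

function parseReleaseTag(tag: string): ReleaseTag {
  // Equivalent of ${VERSION_TAG#v}: drop one leading "v" if present.
  const version = tag.startsWith("v") ? tag.slice(1) : tag;
  // Equivalent of [[ "$VERSION" == *-* ]]: any hyphen marks a pre-release.
  return { tag, version, prerelease: version.includes("-") };
}

const stable = parseReleaseTag("v2026.2.0");
const beta = parseReleaseTag("v2026.2.1-beta.1");
console.log(stable.version, stable.prerelease);
console.log(beta.version, beta.prerelease);
```

The hyphen test is a convention of this tagging scheme, not a full semantic-version parser; a tag with a hyphen anywhere in the version is skipped.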

56

package-lock.json

generated

@@ -12,7 +12,7 @@
    "packages/plugin-runtime",
    "packages/plugin-runtime-types",
    "plugins-external/mcp-server",
    "plugins-external/template-function-faker",
    "plugins-external/faker",
    "plugins-external/httpsnippet",
    "plugins/action-copy-curl",
    "plugins/action-copy-grpcurl",
@@ -3922,13 +3922,6 @@
        "@types/react": "*"
      }
    },
    "node_modules/@types/shell-quote": {
      "version": "1.7.5",
      "resolved": "https://registry.npmjs.org/@types/shell-quote/-/shell-quote-1.7.5.tgz",
      "integrity": "sha512-+UE8GAGRPbJVQDdxi16dgadcBfQ+KG2vgZhV1+3A1XmHbmwcdwhCUwIdy+d3pAGrbvgRoVSjeI9vOWyq376Yzw==",
      "dev": true,
      "license": "MIT"
    },
    "node_modules/@types/unist": {
      "version": "3.0.3",
      "resolved": "https://registry.npmjs.org/@types/unist/-/unist-3.0.3.tgz",
@@ -4160,6 +4153,10 @@
      "resolved": "plugins/auth-oauth2",
      "link": true
    },
    "node_modules/@yaak/faker": {
      "resolved": "plugins-external/faker",
      "link": true
    },
    "node_modules/@yaak/filter-jsonpath": {
      "resolved": "plugins/filter-jsonpath",
      "link": true
@@ -13405,6 +13402,7 @@
      "version": "1.8.3",
      "resolved": "https://registry.npmjs.org/shell-quote/-/shell-quote-1.8.3.tgz",
      "integrity": "sha512-ObmnIF4hXNg1BqhnHmgbDETF8dLPCggZWBjkQfhZpbszZnYur5DUljTcCHii5LC3J5E0yeO/1LIMyH+UvHQgyw==",
      "dev": true,
      "license": "MIT",
      "engines": {
        "node": ">= 0.4"
@@ -13413,6 +13411,12 @@
        "url": "https://github.com/sponsors/ljharb"
      }
    },
    "node_modules/shlex": {
      "version": "3.0.0",
      "resolved": "https://registry.npmjs.org/shlex/-/shlex-3.0.0.tgz",
      "integrity": "sha512-jHPXQQk9d/QXCvJuLPYMOYWez3c43sORAgcIEoV7bFv5AJSJRAOyw5lQO12PMfd385qiLRCaDt7OtEzgrIGZUA==",
      "license": "MIT"
    },
    "node_modules/should": {
      "version": "13.2.3",
      "resolved": "https://registry.npmjs.org/should/-/should-13.2.3.tgz",
@@ -15953,9 +15957,36 @@
        "undici-types": "~7.16.0"
      }
    },
    "plugins-external/faker": {
      "name": "@yaak/faker",
      "version": "1.1.1",
      "dependencies": {
        "@faker-js/faker": "^10.1.0"
      },
      "devDependencies": {
        "@types/node": "^25.0.3",
        "typescript": "^5.9.3"
      }
    },
    "plugins-external/faker/node_modules/@faker-js/faker": {
      "version": "10.3.0",
      "resolved": "https://registry.npmjs.org/@faker-js/faker/-/faker-10.3.0.tgz",
      "integrity": "sha512-It0Sne6P3szg7JIi6CgKbvTZoMjxBZhcv91ZrqrNuaZQfB5WoqYYbzCUOq89YR+VY8juY9M1vDWmDDa2TzfXCw==",
      "funding": [
        {
          "type": "opencollective",
          "url": "https://opencollective.com/fakerjs"
        }
      ],
      "license": "MIT",
      "engines": {
        "node": "^20.19.0 || ^22.13.0 || ^23.5.0 || >=24.0.0",
        "npm": ">=10"
      }
    },
    "plugins-external/httpsnippet": {
      "name": "@yaak/httpsnippet",
      "version": "1.0.0",
      "version": "1.0.3",
      "dependencies": {
        "@readme/httpsnippet": "^11.0.0"
      },
@@ -15983,7 +16014,7 @@
    },
    "plugins-external/mcp-server": {
      "name": "@yaak/mcp-server",
      "version": "0.1.7",
      "version": "0.2.1",
      "dependencies": {
        "@hono/mcp": "^0.2.3",
        "@hono/node-server": "^1.19.7",
@@ -16080,10 +16111,7 @@
      "name": "@yaak/importer-curl",
      "version": "0.1.0",
      "dependencies": {
        "shell-quote": "^1.8.1"
      },
      "devDependencies": {
        "@types/shell-quote": "^1.7.5"
        "shlex": "^3.0.0"
      }
    },
    "plugins/importer-insomnia": {

@@ -11,7 +11,7 @@
    "packages/plugin-runtime",
    "packages/plugin-runtime-types",
    "plugins-external/mcp-server",
    "plugins-external/template-function-faker",
    "plugins-external/faker",
    "plugins-external/httpsnippet",
    "plugins/action-copy-curl",
    "plugins/action-copy-grpcurl",

233

plugins-external/faker/tests/__snapshots__/init.test.ts.snap

Normal file

@@ -0,0 +1,233 @@
// Vitest Snapshot v1, https://vitest.dev/guide/snapshot.html

exports[`template-function-faker > exports all expected template functions 1`] = `
[
  "faker.airline.aircraftType",
  "faker.airline.airline",
  "faker.airline.airplane",
  "faker.airline.airport",
  "faker.airline.flightNumber",
  "faker.airline.recordLocator",
  "faker.airline.seat",
  "faker.animal.bear",
  "faker.animal.bird",
  "faker.animal.cat",
  "faker.animal.cetacean",
  "faker.animal.cow",
  "faker.animal.crocodilia",
  "faker.animal.dog",
  "faker.animal.fish",
  "faker.animal.horse",
  "faker.animal.insect",
  "faker.animal.lion",
  "faker.animal.petName",
  "faker.animal.rabbit",
  "faker.animal.rodent",
  "faker.animal.snake",
  "faker.animal.type",
  "faker.color.cmyk",
  "faker.color.colorByCSSColorSpace",
  "faker.color.cssSupportedFunction",
  "faker.color.cssSupportedSpace",
  "faker.color.hsl",
  "faker.color.human",
  "faker.color.hwb",
  "faker.color.lab",
  "faker.color.lch",
  "faker.color.rgb",
  "faker.color.space",
  "faker.commerce.department",
  "faker.commerce.isbn",
  "faker.commerce.price",
  "faker.commerce.product",
  "faker.commerce.productAdjective",
  "faker.commerce.productDescription",
  "faker.commerce.productMaterial",
  "faker.commerce.productName",
  "faker.commerce.upc",
  "faker.company.buzzAdjective",
  "faker.company.buzzNoun",
  "faker.company.buzzPhrase",
  "faker.company.buzzVerb",
  "faker.company.catchPhrase",
  "faker.company.catchPhraseAdjective",
  "faker.company.catchPhraseDescriptor",
  "faker.company.catchPhraseNoun",
  "faker.company.name",
  "faker.database.collation",
  "faker.database.column",
  "faker.database.engine",
  "faker.database.mongodbObjectId",
  "faker.database.type",
  "faker.date.anytime",
  "faker.date.between",
  "faker.date.betweens",
  "faker.date.birthdate",
  "faker.date.future",
  "faker.date.month",
  "faker.date.past",
  "faker.date.recent",
  "faker.date.soon",
  "faker.date.timeZone",
  "faker.date.weekday",
  "faker.finance.accountName",
  "faker.finance.accountNumber",
  "faker.finance.amount",
  "faker.finance.bic",
  "faker.finance.bitcoinAddress",
  "faker.finance.creditCardCVV",
  "faker.finance.creditCardIssuer",
  "faker.finance.creditCardNumber",
  "faker.finance.currency",
  "faker.finance.currencyCode",
  "faker.finance.currencyName",
  "faker.finance.currencyNumericCode",
  "faker.finance.currencySymbol",
  "faker.finance.ethereumAddress",
  "faker.finance.iban",
  "faker.finance.litecoinAddress",
  "faker.finance.pin",
  "faker.finance.routingNumber",
  "faker.finance.transactionDescription",
  "faker.finance.transactionType",
  "faker.git.branch",
  "faker.git.commitDate",
  "faker.git.commitEntry",
  "faker.git.commitMessage",
  "faker.git.commitSha",
  "faker.hacker.abbreviation",
  "faker.hacker.adjective",
  "faker.hacker.ingverb",
  "faker.hacker.noun",
  "faker.hacker.phrase",
  "faker.hacker.verb",
  "faker.image.avatar",
  "faker.image.avatarGitHub",
  "faker.image.dataUri",
  "faker.image.personPortrait",
  "faker.image.url",
  "faker.image.urlLoremFlickr",
  "faker.image.urlPicsumPhotos",
  "faker.internet.displayName",
  "faker.internet.domainName",
  "faker.internet.domainSuffix",
  "faker.internet.domainWord",
  "faker.internet.email",
  "faker.internet.emoji",
  "faker.internet.exampleEmail",
  "faker.internet.httpMethod",
  "faker.internet.httpStatusCode",
  "faker.internet.ip",
  "faker.internet.ipv4",
  "faker.internet.ipv6",
  "faker.internet.jwt",
  "faker.internet.jwtAlgorithm",
  "faker.internet.mac",
  "faker.internet.password",
  "faker.internet.port",
  "faker.internet.protocol",
  "faker.internet.url",
  "faker.internet.userAgent",
  "faker.internet.username",
  "faker.location.buildingNumber",
  "faker.location.cardinalDirection",
  "faker.location.city",
  "faker.location.continent",
  "faker.location.country",
  "faker.location.countryCode",
  "faker.location.county",
  "faker.location.direction",
  "faker.location.language",
  "faker.location.latitude",
  "faker.location.longitude",
  "faker.location.nearbyGPSCoordinate",
  "faker.location.ordinalDirection",
  "faker.location.secondaryAddress",
  "faker.location.state",
  "faker.location.street",
  "faker.location.streetAddress",
  "faker.location.timeZone",
  "faker.location.zipCode",
  "faker.lorem.lines",
  "faker.lorem.paragraph",
  "faker.lorem.paragraphs",
  "faker.lorem.sentence",
  "faker.lorem.sentences",
  "faker.lorem.slug",
  "faker.lorem.text",
  "faker.lorem.word",
  "faker.lorem.words",
  "faker.music.album",
  "faker.music.artist",
  "faker.music.genre",
  "faker.music.songName",
  "faker.number.bigInt",
  "faker.number.binary",
  "faker.number.float",
  "faker.number.hex",
  "faker.number.int",
  "faker.number.octal",
  "faker.number.romanNumeral",
  "faker.person.bio",
  "faker.person.firstName",
  "faker.person.fullName",
  "faker.person.gender",
  "faker.person.jobArea",
  "faker.person.jobDescriptor",
  "faker.person.jobTit",
  "faker.person.jobType",
  "faker.person.lastName",
  "faker.person.middleName",
  "faker.person.prefix",
  "faker.person.sex",
  "faker.person.sexType",
  "faker.person.suffix",
  "faker.person.zodiacSign",
  "faker.phone.imei",
  "faker.phone.number",
  "faker.science.chemicalElement",
  "faker.science.unit",
  "faker.string.alpha",
  "faker.string.alphanumeric",
  "faker.string.binary",
  "faker.string.fromCharacters",
  "faker.string.hexadecimal",
  "faker.string.nanoid",
  "faker.string.numeric",
  "faker.string.octal",
  "faker.string.sample",
  "faker.string.symbol",
  "faker.string.ulid",
  "faker.string.uuid",
  "faker.system.commonFileExt",
  "faker.system.commonFileName",
  "faker.system.commonFileType",
  "faker.system.cron",
  "faker.system.directoryPath",
  "faker.system.fileExt",
  "faker.system.fileName",
  "faker.system.filePath",
  "faker.system.fileType",
  "faker.system.mimeType",
  "faker.system.networkInterface",
  "faker.system.semver",
  "faker.vehicle.bicycle",
  "faker.vehicle.color",
  "faker.vehicle.fuel",
  "faker.vehicle.manufacturer",
  "faker.vehicle.model",
  "faker.vehicle.type",
  "faker.vehicle.vehicle",
  "faker.vehicle.vin",
  "faker.vehicle.vrm",
  "faker.word.adjective",
  "faker.word.adverb",
  "faker.word.conjunction",
  "faker.word.interjection",
  "faker.word.noun",
  "faker.word.preposition",
  "faker.word.sample",
  "faker.word.verb",
  "faker.word.words",
]
`;

@@ -1,9 +1,12 @@
import { describe, expect, it } from 'vitest';

describe('formatDatetime', () => {
  it('returns formatted current date', async () => {
    // Ensure the plugin imports properly
    const faker = await import('../src/index');
    expect(faker.plugin.templateFunctions?.length).toBe(226);
describe('template-function-faker', () => {
  it('exports all expected template functions', async () => {
    const { plugin } = await import('../src/index');
    const names = plugin.templateFunctions?.map((fn) => fn.name).sort() ?? [];

    // Snapshot the full list of exported function names so we catch any
    // accidental additions, removals, or renames across faker upgrades.
    expect(names).toMatchSnapshot();
  });
});
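
The earlier assertion in this hunk compared only `templateFunctions?.length` against 226, which a rename would leave unchanged because one name disappears and another appears. A minimal sketch of what comparing the full name lists adds is shown here; `diffNames` and `NameDiff` are hypothetical helpers for illustration and do not describe how Vitest's `toMatchSnapshot` works internally.

```typescript
// Hypothetical result shape: names only in `actual` and names only in `expected`.
interface NameDiff {
  added: string[];
  removed: string[];
}

// Compares two lists of template function names by set membership.
function diffNames(expected: readonly string[], actual: readonly string[]): NameDiff {
  const expectedSet = new Set(expected);
  const actualSet = new Set(actual);
  return {
    added: actual.filter((name) => !expectedSet.has(name)).sort(),
    removed: expected.filter((name) => !actualSet.has(name)).sort(),
  };
}

// A rename keeps the count at 2 but still shows up as one addition and one removal.
// "faker.internet.userName" is an invented old name used only for this example.
const before = ["faker.date.past", "faker.internet.userName"];
const after = ["faker.date.past", "faker.internet.username"];
const nameDiff = diffNames(before, after);
console.log(before.length === after.length, nameDiff);
```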

@@ -1,9 +1,45 @@
# Yaak HTTP Snippet Plugin

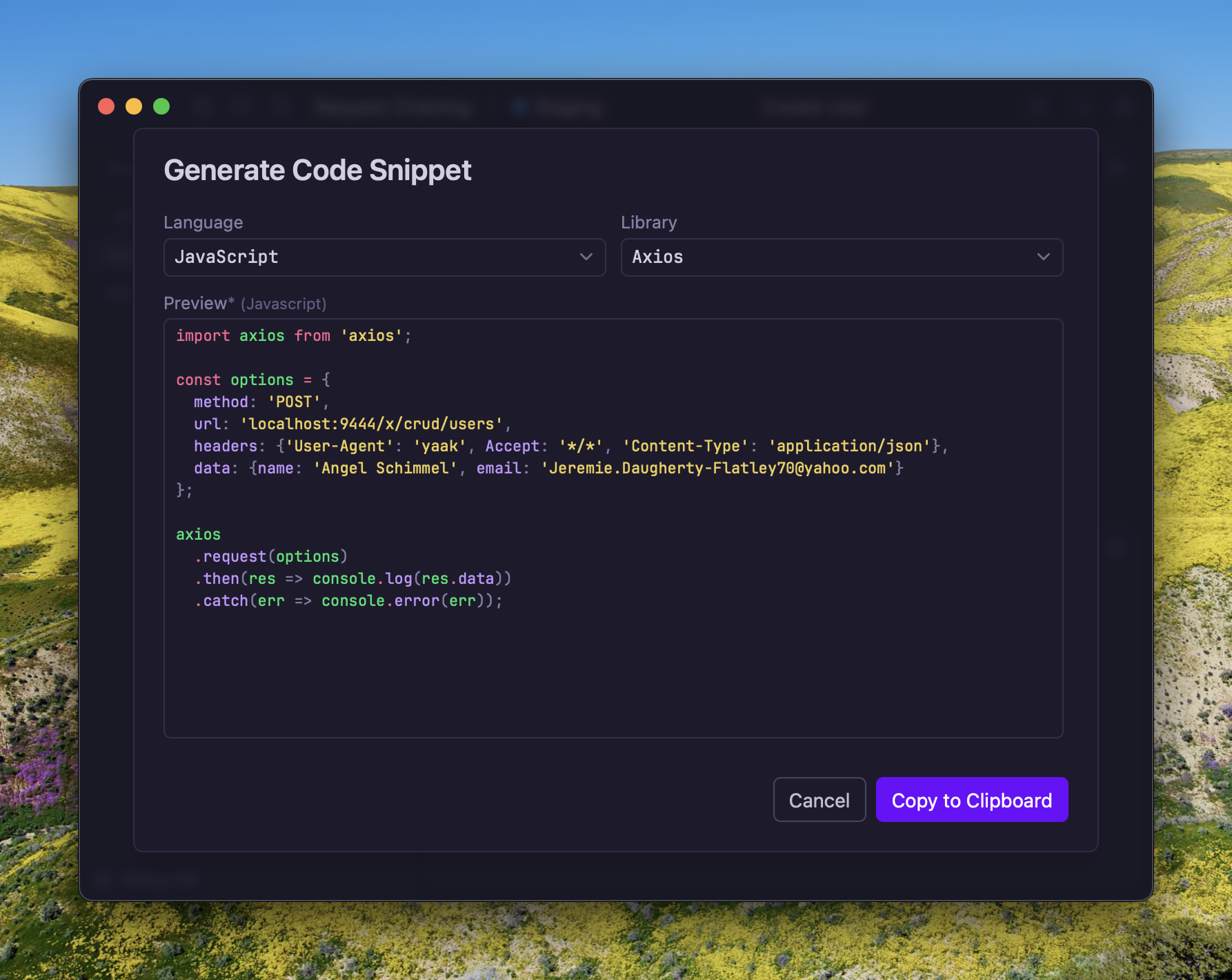

Generate code snippets for HTTP requests in various languages and frameworks,
powered by [httpsnippet](https://github.com/Kong/httpsnippet).
powered by [@readme/httpsnippet](https://github.com/readmeio/httpsnippet).

## How It Works

Right-click any HTTP request (or use the `...` menu) and select **Generate Code Snippet**.
A dialog lets you pick a language and library, with a live preview of the generated code.
Click **Copy to Clipboard** to copy the snippet. Your language and library selections are
remembered for next time.

## Supported Languages

C, Clojure, C#, Go, HTTP, Java, JavaScript, Kotlin, Node.js, Objective-C,
OCaml, PHP, PowerShell, Python, R, Ruby, Shell, and Swift.
Each language supports one or more libraries:

| Language | Libraries |
|---|---|
| C | libcurl |
| Clojure | clj-http |
| C# | HttpClient, RestSharp |
| Go | Native |
| HTTP | HTTP/1.1 |
| Java | AsyncHttp, NetHttp, OkHttp, Unirest |
| JavaScript | Axios, fetch, jQuery, XHR |
| Kotlin | OkHttp |
| Node.js | Axios, fetch, HTTP, Request, Unirest |
| Objective-C | NSURLSession |
| OCaml | CoHTTP |
| PHP | cURL, Guzzle, HTTP v1, HTTP v2 |
| PowerShell | Invoke-WebRequest, RestMethod |
| Python | http.client, Requests |
| R | httr |
| Ruby | Native |
| Shell | cURL, HTTPie, Wget |
| Swift | URLSession |

## Features

- Renders template variables before generating snippets, so the output reflects real values
- Supports all body types: JSON, form-urlencoded, multipart, GraphQL, and raw text
- Includes authentication headers (Basic, Bearer, and API Key)
- Includes query parameters and custom headers

@@ -1,7 +1,7 @@
{
  "name": "@yaak/httpsnippet",
  "private": true,
  "version": "1.0.1",
  "version": "1.0.3",
  "displayName": "HTTP Snippet",
  "description": "Generate code snippets for HTTP requests in various languages and frameworks",
  "minYaakVersion": "2026.2.0-beta.10",

@@ -10,9 +10,6 @@
    "test": "vitest --run tests"
  },
  "dependencies": {
    "shell-quote": "^1.8.1"
  },
  "devDependencies": {
    "@types/shell-quote": "^1.7.5"
    "shlex": "^3.0.0"
  }
}

@@ -7,8 +7,7 @@ import type {
  PluginDefinition,
  Workspace,
} from '@yaakapp/api';
import type { ControlOperator, ParseEntry } from 'shell-quote';
import { parse } from 'shell-quote';
import { split } from 'shlex';

type AtLeast<T, K extends keyof T> = Partial<T> & Pick<T, K>;

@@ -56,31 +55,89 @@ export const plugin: PluginDefinition = {
};

/**
 * Decodes escape sequences in shell $'...' strings
 * Handles Unicode escape sequences (\uXXXX) and common escape codes
 * Splits raw input into individual shell command strings.
 * Handles line continuations, semicolons, and newline-separated curl commands.
 */
function decodeShellString(str: string): string {
  return str
    .replace(/\\u([0-9a-fA-F]{4})/g, (_, hex) => String.fromCharCode(parseInt(hex, 16)))
    .replace(/\\x([0-9a-fA-F]{2})/g, (_, hex) => String.fromCharCode(parseInt(hex, 16)))
    .replace(/\\n/g, '\n')
    .replace(/\\r/g, '\r')
    .replace(/\\t/g, '\t')
    .replace(/\\'/g, "'")
    .replace(/\\"/g, '"')
    .replace(/\\\\/g, '\\');
}
function splitCommands(rawData: string): string[] {
  // Join line continuations (backslash-newline, and backslash-CRLF for Windows)
  const joined = rawData.replace(/\\\r?\n/g, ' ');

/**
 * Checks if a string might contain escape sequences that need decoding
 * If so, decodes them; otherwise returns the string as-is
 */
function maybeDecodeEscapeSequences(str: string): string {
  // Check if the string contains escape sequences that shell-quote might not handle
  if (str.includes('\\u') || str.includes('\\x')) {
    return decodeShellString(str);
  // Count consecutive backslashes immediately before position i.
  // An even count means the quote at i is NOT escaped; odd means it IS escaped.
  function isEscaped(i: number): boolean {
    let backslashes = 0;
    let j = i - 1;
    while (j >= 0 && joined[j] === '\\') {
      backslashes++;
      j--;
    }
    return backslashes % 2 !== 0;
  }
  return str;

  // Split on semicolons and newlines to separate commands
  const commands: string[] = [];
  let current = '';
  let inSingleQuote = false;
  let inDoubleQuote = false;
  let inDollarQuote = false;

  for (let i = 0; i < joined.length; i++) {
    const ch = joined[i]!;
    const next = joined[i + 1];

    // Track quoting state to avoid splitting inside quoted strings
    if (!inDoubleQuote && !inDollarQuote && ch === "'" && !inSingleQuote) {
      inSingleQuote = true;
      current += ch;
      continue;
    }
    if (inSingleQuote && ch === "'") {
      inSingleQuote = false;
      current += ch;
      continue;
    }
    if (!inSingleQuote && !inDollarQuote && ch === '"' && !inDoubleQuote) {
      inDoubleQuote = true;
      current += ch;
      continue;
    }
    if (inDoubleQuote && ch === '"' && !isEscaped(i)) {
      inDoubleQuote = false;
      current += ch;
      continue;
    }
    if (!inSingleQuote && !inDoubleQuote && !inDollarQuote && ch === '$' && next === "'") {
      inDollarQuote = true;
      current += ch + next;
      i++; // Skip the opening quote
      continue;
    }
    if (inDollarQuote && ch === "'" && !isEscaped(i)) {
      inDollarQuote = false;
      current += ch;